Important Note: This article is part of a series in which TechReport.us discusses the theory of Video Stream Matching.

2.9 What is a Video

Video (Latin for “I see”, first person singular present, indicative of videre, “to see”) is the technology of electronically capturing, recording, processing, storing, transmitting, and reconstructing a sequence of still images representing scenes in motion. Video technology was first developed for television systems, but has been further developed in many formats to allow for consumer video recording. Video can also be viewed through the Internet as video clips or streaming media clips on computer monitors.[23]

Video is different from film, which captures a moving image as a sequence of still pictures photographically. Many video formats are available today, including MPEG, MP4 and VOB. Only AVI is discussed here because it is the format used in the VSM system.[19]

2.9.1 – Characteristics of video streams

2.9.1.1 Number of Frames Per Second

Frame rate, the number of still pictures per unit of time of video, ranges from six or eight frames per second (fps) for old mechanical cameras to 120 or more frames per second for new professional cameras. PAL (Europe, Asia, Australia, etc.) and SECAM (France, Russia, parts of Africa, etc.) standards specify 25 fps, while NTSC (USA, Canada, Japan, etc.) specifies 29.97 fps. Film is shot at the slower frame rate of 24 fps, which slightly complicates the process of transferring a cinematic motion picture to video. To achieve the illusion of a moving image, the minimum frame rate is about ten frames per second.[24]

2.9.1.2 Bit Rate (Digital Only)

Bit rate is a measure of the rate of information content in a video stream. It is quantified in bits per second (bit/s) or megabits per second (Mbit/s). A higher bit rate allows better video quality. For example, VideoCD, with a bit rate of about 1 Mbit/s, is lower quality than DVD, with a bit rate of about 5 Mbit/s. HDTV has a still higher quality, with a bit rate of about 20 Mbit/s.

Variable bit rate (VBR) is a strategy to maximize the visual video quality and minimize the bit rate. On fast motion scenes, a variable bit rate uses more bits than it does on slow motion scenes of similar duration yet achieves a consistent visual quality. For real-time and non-buffered video streaming when the available bandwidth is fixed, e.g. in videoconferencing delivered on channels of fixed bandwidth, a constant bit rate (CBR) must be used.
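As a rough illustration of what these figures mean for storage, the sketch below converts a constant bit rate and a duration into a size in bytes. The function name `stream_size_bytes` is hypothetical, the calculation assumes 1 Mbit = 1,000,000 bits, and it ignores container overhead and audio.

```python
def stream_size_bytes(bit_rate_mbit_s: float, duration_s: float) -> float:
    """Approximate payload size of a constant-bit-rate stream, in bytes."""
    bits = bit_rate_mbit_s * 1_000_000 * duration_s  # total bits in the stream
    return bits / 8  # 8 bits per byte


# One minute of video at the approximate bit rates quoted above.
for name, rate in [("VideoCD", 1), ("DVD", 5), ("HDTV", 20)]:
    megabytes = stream_size_bytes(rate, 60) / 1_000_000
    print(f"{name}: about {megabytes:.1f} MB per minute")
```

With a variable bit rate the same formula only gives an estimate based on the average rate.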

2.9.2 What is an AVI?

AVI stands for Audio Video Interleave. It is a special case of the RIFF (Resource Interchange File Format). AVI is defined by Microsoft. AVI is the most common format for audio/video data on the PC. AVI is an example of a de facto (by fact) standard.[20]

AVI is a multimedia container format introduced by Microsoft in November 1992 as part of its Video for Windows technology. AVI files can contain both audio and video data in a standard container that allows synchronous audio-with-video playback. Like DVDs, AVI files support multiple audio and video streams, although these features are seldom used. Most AVI files also use the file format extensions developed by the Matrox OpenDML group in February 1996. These files are supported by Microsoft, and are unofficially called “AVI 2.0”.

AVI is a special case of the Resource Interchange File Format (RIFF), which divides a file’s data into blocks, or “chunks.” Each chunk is identified by a FourCC tag. An AVI file takes the form of a single chunk in a RIFF-formatted file, which is then subdivided into two mandatory chunks and one optional chunk. The structure of a RIFF file was apparently copied from the earlier IFF format devised by Electronic Arts in the mid-1980s. The primary difference is the “endianness” of the integers used: RIFF stores them in little-endian order, whereas the original IFF format stores them in big-endian order. In fact, a properly written IFF parser for the now-aged AmigaOS should, after correcting for endianness, parse RIFF files just fine.

The first sub-chunk is identified by the “hdrl” tag. This sub-chunk is the file header and contains metadata about the video, such as its width, height and frame rate. The second sub-chunk is identified by the “movi” tag. This chunk contains the actual audio/visual data that make up the AVI movie. The third optional sub-chunk is identified by the “idx1” tag which indexes the physical addresses [within the file] of the data chunks.
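The chunk layout described above can be sketched as a small walker over an in-memory buffer. This is a minimal illustration, not a complete AVI parser: the function names are hypothetical, and the demo buffer is synthetic rather than a real video. In AVI files the “hdrl” and “movi” sub-chunks are stored as `LIST` chunks whose first four data bytes carry the list type, which is what the sketch reports.

```python
import struct


def iter_chunks(buf: bytes, start: int, end: int):
    """Yield (tag, data_offset, size) for each RIFF chunk in buf[start:end]."""
    pos = start
    while pos + 8 <= end:
        # Every chunk starts with a FourCC tag and a little-endian 32-bit size.
        tag, size = struct.unpack_from("<4sI", buf, pos)
        yield tag.decode("ascii"), pos + 8, size
        pos += 8 + size + (size & 1)  # chunk data is padded to an even length


def top_level_avi_chunks(buf: bytes):
    """Return the names of the chunks directly inside the outer RIFF chunk."""
    riff, size, form = struct.unpack_from("<4sI4s", buf, 0)
    if riff != b"RIFF" or form != b"AVI ":
        raise ValueError("not a RIFF/AVI buffer")
    names = []
    for tag, offset, _size in iter_chunks(buf, 12, 8 + size):
        if tag == "LIST":
            # Report the list type ('hdrl', 'movi') instead of the generic tag.
            names.append(buf[offset:offset + 4].decode("ascii"))
        else:
            names.append(tag)
    return names


def make_chunk(tag: bytes, payload: bytes) -> bytes:
    """Build one chunk for the synthetic demo buffer (hypothetical helper)."""
    pad = b"\x00" if len(payload) % 2 else b""
    return tag + struct.pack("<I", len(payload)) + payload + pad


body = make_chunk(b"LIST", b"hdrl") + make_chunk(b"LIST", b"movi") + make_chunk(b"idx1", b"")
demo = b"RIFF" + struct.pack("<I", 4 + len(body)) + b"AVI " + body
print(top_level_avi_chunks(demo))
```

For a real file, the same functions could be applied to the bytes read from disk, although large files would be better handled by seeking than by loading everything into memory.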

By way of the RIFF format, the audio/visual data contained in the “movi” chunk can be encoded or decoded by software called a codec (which is an abbreviation for coder-decoder, but in reality is actually a translation scheme). Upon creation of the file, the codec translates between raw data and the (compressed) data format used inside the chunk. An AVI file may therefore carry audio/visual data inside the chunks in virtually any compression scheme, including Full Frame (Uncompressed), Intel Real Time (Indeo), Cinepak, Motion JPEG, Editable MPEG, VDOWave, ClearVideo / RealVideo, QPEG, MPEG-4 Video, et al.

2.10 What is a Bitmap (BMP)?

The .bmp file format (sometimes also saved as .dib) is the standard for a Windows 3.0 or later DIB (device-independent bitmap) file. It may use compression (though I never came across a compressed .bmp file) and is, by itself, not capable of storing animation. You can animate a bitmap using different methods, but you have to write the code that performs the animation yourself. There are different ways to compress a .bmp file, but I won’t explain them here because they are so rarely used. The image data itself can contain either pointers to entries in a color table or literal RGB values.[21, 22]

2.10.1 Basic Structure

A .bmp file consists of the following data structures:

BITMAPFILEHEADER bmfh;

BITMAPINFOHEADER bmih;

RGBQUAD aColors[];

BYTE aBitmapBits[];

bmfh contains information about the bitmap file (about the file, not about the bitmap itself). bmih contains information about the bitmap, such as size and colors. The aColors array contains a color table. The rest is the image data, whose format is specified by the bmih structure.

2.10.2 Exact Structure

The following tables give exact information about the data structures and also contain the settings for a bitmap with the following dimensions: size 100×100, 256 colors, no compression. The start value is the position of the byte in the file at which the data element starts (counted from 1), the size value is the number of bytes used by this data element, and the name value is the name assigned to this data element in the Microsoft API documentation. Stdvalue stands for standard value. There is actually no such thing as a standard value, but this is the value Paint assigns to the data element for the bitmap dimensions specified above (100x100x256). The purpose column gives a short explanation of the data element.

2.10.3 – The BITMAPFILEHEADER

| start | size | name | stdvalue | purpose |
| --- | --- | --- | --- | --- |
| 1 | 2 | bfType | 19778 | must always be set to ‘BM’ to declare that this is a .bmp-file. |
| 3 | 4 | bfSize | ?? | specifies the size of the file in bytes. |
| 7 | 2 | bfReserved1 | 0 | must always be set to zero. |
| 9 | 2 | bfReserved2 | 0 | must always be set to zero. |
| 11 | 4 | bfOffBits | 1078 | specifies the offset from the beginning of the file to the bitmap data. |

Figure 2.16 File Header

2.10.4 – The BITMAPINFOHEADER

| start | size | name | stdvalue | purpose |
| --- | --- | --- | --- | --- |
| 15 | 4 | biSize | 40 | specifies the size of the BITMAPINFOHEADER structure, in bytes. |
| 19 | 4 | biWidth | 100 | specifies the width of the image, in pixels. |
| 23 | 4 | biHeight | 100 | specifies the height of the image, in pixels. |
| 27 | 2 | biPlanes | 1 | specifies the number of planes of the target device, must be set to 1. |
| 29 | 2 | biBitCount | 8 | specifies the number of bits per pixel. |
| 31 | 4 | biCompression | 0 | specifies the type of compression, usually set to zero (no compression). |
| 35 | 4 | biSizeImage | 0 | specifies the size of the image data, in bytes. If there is no compression, it is valid to set this member to zero. |
| 39 | 4 | biXPelsPerMeter | 0 | specifies the horizontal pixels per meter on the designated target device, usually set to zero. |
| 43 | 4 | biYPelsPerMeter | 0 | specifies the vertical pixels per meter on the designated target device, usually set to zero. |
| 47 | 4 | biClrUsed | 0 | specifies the number of colors used in the bitmap; if set to zero, the number of colors is calculated using the biBitCount member. |
| 51 | 4 | biClrImportant | 0 | specifies the number of colors that are ‘important’ for the bitmap; if set to zero, all colors are important. |

Figure 2.17 Info Header
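The two header tables map directly onto fixed-size little-endian records, so they can be read with Python's `struct` module. The sketch below is an illustration under stated assumptions: the function name `parse_bmp_headers` and the dictionary keys are hypothetical, the info header is assumed to be the 40-byte BITMAPINFOHEADER, and the demo bytes are built in memory from the example values (100×100, 8 bits per pixel) rather than read from a real file. Note that the tables count byte positions from 1, while the code uses offsets counted from 0.

```python
import struct

FILE_HEADER = struct.Struct("<2sIHHI")       # BITMAPFILEHEADER, 14 bytes
INFO_HEADER = struct.Struct("<IiiHHIIiiII")  # BITMAPINFOHEADER, 40 bytes


def parse_bmp_headers(data: bytes) -> dict:
    """Decode the file header and info header at the start of a .bmp file."""
    bf_type, bf_size, _res1, _res2, bf_off_bits = FILE_HEADER.unpack_from(data, 0)
    if bf_type != b"BM":
        raise ValueError("missing 'BM' signature")
    (bi_size, width, height, planes, bit_count, compression,
     size_image, x_ppm, y_ppm, clr_used, clr_important) = INFO_HEADER.unpack_from(data, 14)
    return {
        "file_size": bf_size,
        "pixel_offset": bf_off_bits,
        "header_size": bi_size,
        "width": width,
        "height": height,
        "planes": planes,
        "bits_per_pixel": bit_count,
        "compression": compression,
    }


# Headers for the example bitmap: 14 + 40 + 256 * 4 = 1078 bytes before the pixels,
# followed by 100 * 100 bytes of pixel data.
demo = (FILE_HEADER.pack(b"BM", 1078 + 100 * 100, 0, 0, 1078)
        + INFO_HEADER.pack(40, 100, 100, 1, 8, 0, 0, 0, 0, 0, 0))
print(parse_bmp_headers(demo))
print(int.from_bytes(b"BM", "little"))  # the signature read as a 16-bit integer
```

The last line shows where the stdvalue 19778 for bfType comes from: it is the two characters ‘BM’ interpreted as a little-endian 16-bit number.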

2.10.5 – The RGBQUAD Array

The following table shows a single RGBQUAD structure:

| start | size | name | purpose |
| --- | --- | --- | --- |
| 1 | 1 | rgbBlue | specifies the blue part of the color. |
| 2 | 1 | rgbGreen | specifies the green part of the color. |
| 3 | 1 | rgbRed | specifies the red part of the color. |
| 4 | 1 | rgbReserved | must always be set to zero. |

Figure 2.18 RGB Table
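Because each RGBQUAD stores its components in blue, green, red order, reading a color table takes a small reordering step. The following sketch assumes the table starts right after a 14-byte file header and a 40-byte info header (offset 54); the function name `parse_color_table` is hypothetical.

```python
def parse_color_table(data: bytes, count: int, offset: int = 54):
    """Return `count` (red, green, blue) tuples from consecutive RGBQUAD entries."""
    colors = []
    for i in range(count):
        start = offset + 4 * i
        blue, green, red, _reserved = data[start:start + 4]
        colors.append((red, green, blue))  # reorder to the familiar RGB order
    return colors


# Two entries after 54 placeholder header bytes: pure blue, then a mixed color.
demo_table = bytes(54) + bytes([255, 0, 0, 0, 0, 128, 64, 0])
print(parse_color_table(demo_table, 2))
```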

2.10.6 – The Pixel Data:

How the pixel data is to be interpreted depends on the BITMAPINFOHEADER structure (see above).[21,22]

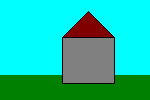

It is important to know that the rows of a DIB are stored upside down. That means that the topmost row that appears on the screen is actually the last row stored in the bitmap. A short example:

| pixels stored in .bmp-file | |

| pixels displayed on the screen |

Figure 2.19 Bit Map Storing Mechanism


You do not need to reverse the rows manually. The API functions that display the bitmap will do that for you automatically.
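When the raw bytes are read without those API functions, the reversal has to be done by hand. A minimal sketch, assuming a positive biHeight (bottom-up storage) and that `stride` is the number of bytes each stored row occupies; the function name `rows_top_down` is hypothetical.

```python
def rows_top_down(pixel_data: bytes, stride: int, height: int):
    """Split bottom-up DIB pixel data into rows ordered from the top of the image."""
    rows = [pixel_data[i * stride:(i + 1) * stride] for i in range(height)]
    rows.reverse()  # the first stored row is the bottom row on screen
    return rows


# Three stored rows of four bytes each; 'cccc' is stored last, so it is the top row.
print(rows_top_down(b"aaaabbbbcccc", stride=4, height=3))
```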

Another important point is that the number of bytes in one row must always be padded to a multiple of four. You simply append zero bytes until the number of bytes in a row reaches a multiple of four. An example:

| 6 bytes that represent a row in the bitmap: | A0 37 F2 8B 31 C4 |

| must be saved as: | A0 37 F2 8B 31 C4 00 00 |

Figure 2.20 Bytes

Here two zero bytes are appended to reach eight, the next multiple of four after six. If you keep these few rules in mind, working with .bmp files should be easy to master.
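The padding rule can be written as a short calculation. In this sketch the names `row_stride` and `pad_row` are hypothetical; `row_stride` computes the padded row length from the image width and bit depth, and `pad_row` applies the zero-byte padding to an existing row.

```python
def row_stride(width: int, bits_per_pixel: int) -> int:
    """Bytes per stored row, rounded up to a multiple of four."""
    return (width * bits_per_pixel + 31) // 32 * 4


def pad_row(row: bytes) -> bytes:
    """Append zero bytes until the row length is a multiple of four."""
    return row + bytes(-len(row) % 4)


row = bytes.fromhex("A037F28B31C4")       # the six-byte row from the example
print(pad_row(row).hex(" ").upper())      # the row as it must be saved
print(row_stride(100, 8))                 # the 100-pixel, 8-bit example needs no padding
```

Two pixels at 24 bits per pixel also occupy six bytes, so `row_stride(2, 24)` gives the same padded length of eight.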

2.11 What are Key Frames?

In video, key frames are the drawings that are essential to define a movement. They are called “frames” because their position in time is measured in frames on a strip of film. A sequence of key frames defines which movement the spectator will see, whereas the position of the key frames on the film (or video) defines the timing of the movement. Because only two or three key frames over the span of a second don’t create the illusion of movement, the remaining frames are filled with more drawings, called “inbetweens”.

Here we use key frames for better performance, and they are critical to this system. Without finding key frames accurately, accurate decision making is not possible. The better the key frames the system obtains, the better the results it will provide.

In this report, a key frame is the mean value of many other frames: a class of frames is represented by a single frame, which is called the mean frame.[17]
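A mean frame of this kind can be sketched as a pixel-wise average. The source does not specify how frames are grouped into a class or how pixels are represented, so the sketch assumes equally sized grayscale frames given as lists of rows; the function name `mean_frame` is hypothetical.

```python
def mean_frame(frames):
    """Pixel-wise mean of equally sized grayscale frames (each a list of rows)."""
    if not frames:
        raise ValueError("need at least one frame")
    height, width = len(frames[0]), len(frames[0][0])
    count = len(frames)
    return [
        [sum(frame[y][x] for frame in frames) / count for x in range(width)]
        for y in range(height)
    ]


# Two 2x2 frames belonging to the same class (illustrative values only).
frame_a = [[0, 10], [20, 30]]
frame_b = [[10, 30], [20, 50]]
print(mean_frame([frame_a, frame_b]))
```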

2.12 – What is Feature Extraction?

Feature extraction involves simplifying the amount of resources required to describe a large set of data accurately. When performing analysis of complex data, one of the major problems stems from the number of variables involved. Analysis with a large number of variables generally requires a large amount of memory and computation power, or a classification algorithm that overfits the training sample and generalizes poorly to new samples. Feature extraction is a general term for methods of constructing combinations of the variables to get around these problems while still describing the data with sufficient accuracy.[18]
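As one illustration of the idea, a frame with thousands of pixel values can be reduced to a handful of numbers by counting how many pixels fall into each brightness range. This is only an example of a feature; it is not claimed to be the feature used by the VSM system. The function name `intensity_histogram` and its parameters are hypothetical, and the frame is assumed to be grayscale with integer values from 0 to `max_value`.

```python
def intensity_histogram(frame, bins: int = 8, max_value: int = 255):
    """Reduce a grayscale frame to a normalized histogram with `bins` entries."""
    counts = [0] * bins
    total = 0
    for row in frame:
        for value in row:
            index = min(value * bins // (max_value + 1), bins - 1)
            counts[index] += 1
            total += 1
    if total == 0:
        raise ValueError("frame has no pixels")
    return [count / total for count in counts]


# A 2x2 frame described by two numbers instead of four pixel values.
print(intensity_histogram([[0, 255], [128, 130]], bins=2))
```

Two frames can then be compared through their short feature vectors rather than pixel by pixel.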