If you’re just stepping into the world of machine learning, what could be better than starting with one of the most classic beginner‑friendly datasets: the Iris flower dataset? 🌿 In this post, we’ll build a machine learning model that classifies Iris flowers into species based on simple botanical measurements like sepal length and petal width. You’ll also learn how to run the project from its GitHub repository, 18‑AI‑Iris‑Flower‑Classification‑Using‑Machine‑Learning, and see the complete workflow and technologies involved.

The Iris dataset is often called the “Hello World” of machine learning because it’s small, well‑structured, and perfect for learning classification fundamentals. It contains 150 samples, 50 from each of three Iris species (Setosa, Versicolor, and Virginica), and every sample has four numeric features: sepal length, sepal width, petal length, and petal width.
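To see those numbers for yourself, here is a minimal sketch that loads the dataset and checks its shape. It assumes you use the copy bundled with scikit-learn via `load_iris`; the project notebook may load the data differently, and the `species` column name is just an illustrative choice.

```python
from sklearn.datasets import load_iris

# Assumption: use scikit-learn's bundled copy of the Iris dataset.
iris = load_iris(as_frame=True)
df = iris.frame  # four feature columns plus an integer "target" column

# Map the integer class codes (0, 1, 2) to readable species names.
# "species" is a hypothetical column name added for illustration.
df["species"] = df["target"].map(dict(enumerate(iris.target_names)))

print(df.shape)                      # rows x columns
print(df["species"].value_counts())  # samples per species
print(df.head())
```

The class counts are balanced, which is one reason plain accuracy is a reasonable first metric for this dataset.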

🧠 What This Project Does

The core objective of this project is to:

- Load and explore the famous Iris dataset.

- Preprocess the data using standard ML techniques.

- Train two machine learning classifiers: K‑Nearest Neighbors (KNN) and Logistic Regression.

- Evaluate and compare model performance using metrics like accuracy, confusion matrices, and classification reports.

- Optionally, apply cross‑validation and hyperparameter tuning to get the best model performance.

This practical Jupyter Notebook‑based project is a great way to solidify your understanding of the complete ML workflow.

🛠️ Tools & Technologies Used

Here’s a quick look at the workflow components and technologies used throughout this project:

📌 Languages & Libraries

- Python — the main programming language used.

- NumPy — for numerical operations.

- Pandas — to load and manipulate tabular data.

- Scikit‑Learn — for machine learning algorithms, preprocessing, and evaluation.

- Matplotlib & Seaborn — for visualizing the dataset and model results.

- Jupyter Notebook — as the interactive coding environment.

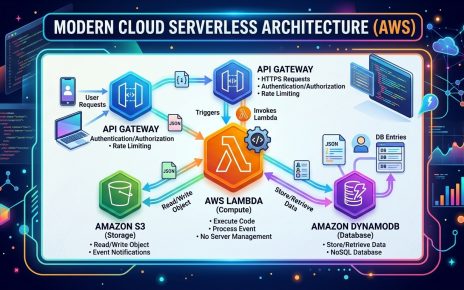

🧠 ML Workflow Steps

The typical machine learning workflow that this project follows includes:

- Data Loading — load the dataset into a Pandas DataFrame.

- Exploratory Data Analysis (EDA) — understand distribution, relationships, and patterns.

- Feature Preprocessing — scale numeric features using StandardScaler.

- Model Selection — try different algorithms like K‑Nearest Neighbors (KNN) and Logistic Regression.

- Model Training — fit models on training data.

- Evaluation & Metrics — evaluate using accuracy, classification reports, and confusion matrices.

- Cross‑Validation & Hyperparameter Tuning — optionally use tools like GridSearchCV to fine‑tune models.

- Reporting & Visualization — provide final insights and visual comparisons of model performance.
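The core of this workflow fits in a few lines. The following is a minimal sketch, not the notebook's actual code: it assumes scikit-learn's bundled dataset, an 80/20 stratified split, and illustrative hyperparameters (`n_neighbors=5`, `max_iter=1000`, `random_state=42`) that were picked here rather than taken from the repository.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.preprocessing import StandardScaler

# Data loading (assumption: scikit-learn's bundled copy).
X, y = load_iris(return_X_y=True)

# Hold out 20% for testing; stratify keeps the class balance in both splits.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)

# Fit the scaler on the training data only, then apply it to both splits.
scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)
X_test_scaled = scaler.transform(X_test)

# Illustrative hyperparameters, not necessarily those used in the notebook.
models = {
    "KNN": KNeighborsClassifier(n_neighbors=5),
    "Logistic Regression": LogisticRegression(max_iter=1000),
}

# Train each model and record its accuracy on the held-out test set.
scores = {}
for name, model in models.items():
    model.fit(X_train_scaled, y_train)
    scores[name] = accuracy_score(y_test, model.predict(X_test_scaled))
    print(f"{name}: test accuracy = {scores[name]:.3f}")
```

Fitting the scaler on the training split alone keeps information from the test set out of preprocessing, so the test accuracy stays an honest estimate.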

🚀 Step‑by‑Step: How to Use This Project

Here’s a clear walkthrough to get the code running on your system and experiment with the Iris classifier:

📌 1. Clone the Repository

Open a terminal or command prompt and clone the repository:

git clone https://github.com/sf-co/18-ai-iris-flower-classification-using-machine-learning

cd 18-ai-iris-flower-classification-using-machine-learning

This downloads the entire project and switches into its directory.

📌 2. Install Dependencies

Make sure you have Python 3.x installed. Then install the required packages:

pip install numpy pandas scikit-learn matplotlib seaborn jupyter

This ensures all libraries for data loading, modeling, and plotting are available.
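If you want to confirm the install worked before opening the notebook, a small check like the one below can help. It is a sketch that only tests whether each library can be found; note that scikit-learn is imported under the name `sklearn`. Jupyter is left out because it is the launcher rather than a library the modeling code imports.

```python
import importlib.util

# Import names for the libraries installed above.
# scikit-learn's import name is "sklearn", not "scikit-learn".
required = ["numpy", "pandas", "sklearn", "matplotlib", "seaborn"]

# find_spec returns None when a top-level package cannot be located.
status = {name: importlib.util.find_spec(name) is not None for name in required}
missing = [name for name, found in status.items() if not found]

for name, found in status.items():
    print(f"{name}: {'ok' if found else 'MISSING'}")
print("Missing packages:", missing or "none")
```

Anything reported as missing can be installed again with `pip install`, using the pip package name (for example `scikit-learn` for `sklearn`).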

📌 3. Open the Jupyter Notebook

Run Jupyter Notebook in the project directory:

jupyter notebook

Then open the file app.ipynb — this is where all the modeling code and steps are laid out.

📌 4. Run the Cells Step‑by‑Step

Inside the notebook:

- Load the Iris dataset into a DataFrame.

- Visualize feature distributions and relationships using plots.

- Preprocess the data — scale features, encode labels, and split into train/test sets.

- Train the two machine learning models: KNN and Logistic Regression.

- Evaluate them using accuracy scores and visualize results.

- Optionally add cross‑validation or GridSearchCV to find optimal hyperparameters.

Running each cell sequentially will let you see how the model evolves and performs.
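For the optional cross‑validation step, one possible approach is to wrap scaling and the classifier in a pipeline and score it across several folds. This is a hedged sketch: the 5‑fold setup, the `random_state`, and the hyperparameters are assumptions made for illustration, not values confirmed by the notebook.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# Assumption: 5 stratified folds, shuffled with a fixed seed for repeatability.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

# Putting the scaler inside the pipeline means it is re-fit on each
# training fold, so the validation fold never influences the scaling.
candidates = {
    "KNN": make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5)),
    "Logistic Regression": make_pipeline(
        StandardScaler(), LogisticRegression(max_iter=1000)
    ),
}

cv_means = {}
for name, pipeline in candidates.items():
    fold_scores = cross_val_score(pipeline, X, y, cv=cv, scoring="accuracy")
    cv_means[name] = fold_scores.mean()
    print(f"{name}: mean = {fold_scores.mean():.3f}, std = {fold_scores.std():.3f}")
```

With only 150 samples, a single train/test split can swing by several points depending on the seed, so the mean across folds is usually a steadier basis for comparing the two models.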

📌 5. Interpret Results

Once the models are trained:

- Analyze accuracy scores and metrics.

- Compare models.

- Look at confusion matrices to understand which classes are sometimes misclassified.

This gives you insights into how well the models learned from the Iris data.
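To make the confusion matrix concrete, here is a minimal sketch that trains one model and prints both the matrix and the classification report. The KNN pipeline, split size, and seed are illustrative assumptions; swap in whichever model you trained in the notebook.

```python
from sklearn.datasets import load_iris
from sklearn.metrics import classification_report, confusion_matrix
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

iris = load_iris()
X_train, X_test, y_train, y_test = train_test_split(
    iris.data, iris.target, test_size=0.2, stratify=iris.target, random_state=42
)

# Assumption: a scaled KNN model stands in for the notebook's classifiers.
model = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5))
model.fit(X_train, y_train)
y_pred = model.predict(X_test)

# Rows are true classes, columns are predicted classes,
# both ordered as in iris.target_names.
cm = confusion_matrix(y_test, y_pred)
print(iris.target_names)
print(cm)

# Per-class precision, recall, and F1 score.
print(classification_report(y_test, y_pred, target_names=iris.target_names))
```

Off‑diagonal entries are the misclassifications. On Iris they tend to appear between Versicolor and Virginica, whose measurements overlap, while Setosa is usually separated cleanly.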

📈 A Few Tips to Extend This Project

Here are some ways you might expand or improve the project:

- Add More Algorithms: Try Decision Trees, Random Forests, or Support Vector Machines.

- Deploy a Simple Web UI: Use Streamlit or Flask to build a web interface for live predictions.

- Hyperparameter Tuning: Use tools like GridSearchCV to automatically find the best parameters.

- Model Serialization: Save your model using joblib or pickle to reuse without retraining.
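Two of these tips, hyperparameter tuning and model serialization, combine naturally. The sketch below tunes a KNN pipeline with GridSearchCV and saves the best model with joblib. The parameter grid, the step names (`scaler`, `knn`), and the file name `iris_knn.joblib` are all hypothetical choices made for this example.

```python
import os
import tempfile

import joblib
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# Step names are arbitrary; they become prefixes in the parameter grid.
pipeline = Pipeline([("scaler", StandardScaler()), ("knn", KNeighborsClassifier())])

# Hypothetical search space: "<step>__<parameter>" addresses a pipeline step.
param_grid = {
    "knn__n_neighbors": [3, 5, 7, 9],
    "knn__weights": ["uniform", "distance"],
}

# Try every combination with 5-fold cross-validation and keep the best one.
search = GridSearchCV(pipeline, param_grid, cv=5, scoring="accuracy")
search.fit(X, y)
print("Best parameters:", search.best_params_)
print(f"Best CV accuracy: {search.best_score_:.3f}")

# Save the refit best pipeline (scaler included), then load it back.
# Assumption: a temp-directory path; use any location you prefer.
model_path = os.path.join(tempfile.gettempdir(), "iris_knn.joblib")
joblib.dump(search.best_estimator_, model_path)
reloaded = joblib.load(model_path)

# Predict the first three samples with the reloaded model.
print(reloaded.predict(X[:3]))
```

Saving the whole pipeline rather than the bare classifier means the scaler travels with the model, so new measurements can be passed in unscaled at prediction time.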

🌟 Conclusion

This project is a perfect stepping stone into machine learning — it blends data exploration, preprocessing, modeling, and evaluation in a simple, intuitive way. By following these steps, you’ll not only run the Iris classifier locally but also understand the full ML workflow used by professionals.

Whether you’re a student, a data science beginner, or someone exploring AI, kicking off with the Iris dataset builds solid foundational skills that apply across countless predictive modeling problems. 🌸

Happy modeling! 🚀